Have pollsters failed? Only if their goal was accuracy

Extracting the truth from a flood of narratives

TLDR:

-Polls are skewed, largely by design

-A majority of polls are commissioned specifically to skew aggregate results

-This tells us far more about those who seek to control the narrative than it does about the actual election outlook, and the problem is magnified [on purpose] in our current era of censorship

Perhaps we should be less focused on a poll's results, and more focused on a poll's purpose

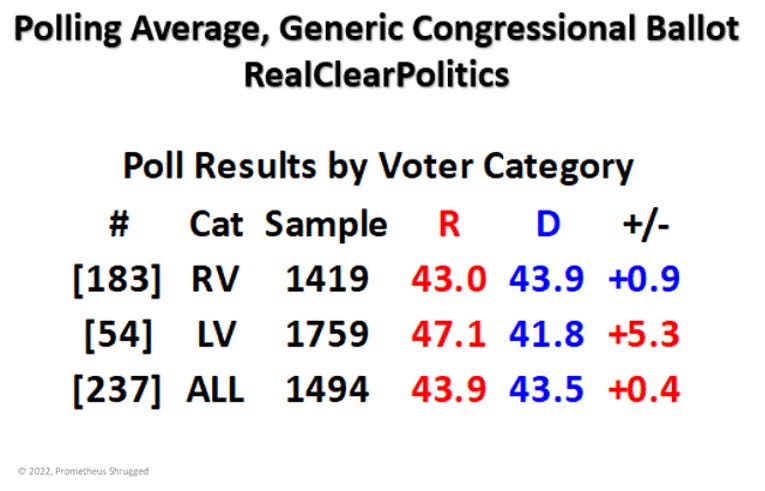

The image above depicts results from 237 separate polls that asked the question [with slight variations] ‘Which party’s candidates are you most likely to vote for, if an election were held today?’ 237 is the total number of such polls since the beginning of August 2021, as compiled by RealClearPolitics; I chose that particular cutoff point because it covers everything from just prior to the American withdrawal from Afghanistan [when the Biden administration’s approval numbers first began to sink] up to the present.

I compiled and analyzed these poll results because they provide a stark example of how a single data-set can be [and often is] manipulated to suit a particular narrative purpose - which has been taken to historic extremes since the start of the COVID-19 pandemic.

Readers of Prometheus Shrugged - especially those who’ve been around for more than a few months - should be familiar with the argument that censorship and narrative control have been applied during this pandemic to an unprecedented degree. As an American, I’ve found these efforts so unconstitutional and so broad that they literally became my impetus for moving to Substack in February 2021 - a transition that started when Facebook rejected the following image because it went against their standards by “inciting violence”:

-That article was originally a Facebook post [retroactively placed here on Substack].

Ever since early 2020, when my very first pandemic commentaries were being written, I’ve sought to highlight the bizarre disconnect between published data/analyses and what the actual conclusions should’ve been:

Which brings us to the dichotomous reporting on polling data, and why it’s important to understand the difference between registered voters [RV] and likely voters [LV]. Per polling organization Gallup:

Here, Gallup points out the obvious - that polls based on registered voters will be less accurate than polls based on likely voters - because likely voters are more likely to vote.

It’s important to clarify that I added the bold accents to that last statement on purpose - not to insult my readers’ intelligence, but to highlight that this, in essence, is exactly what many news organizations are doing when they drive entire news cycles on the basis of polling data that they know are flawed at best.

Comparing RV & LV

The first odd data point is the overwhelming preponderance of RV-based polls [183 to 54], despite the known weaknesses in RV data as accurate predictors of election outcomes. I don’t have enough time to point out all of the historical evidence that supports this conclusion, so we’ll take Gallup’s word for it at the moment.

Next, let’s consider what the trends look like when separated [RV first, then LV]:

Both charts depict rolling averages, of 5 or 3 polls, respectively [due to the disparity in total number of polls], but the overall conclusion is that the 6.2% difference in polling average that I showed in the very beginning represents a very different baseline in voters’ party preference between RV and LV, at any given point on the timeline. Given that LV data will always be expected to be closer to reality, the best way to explain the impact of over-loading the data with RV samples is simply to say that the aggregate average will be skewed towards the partisan leanings professed by all registered voters - with the added proviso that not all states require registered voters to profess a party preference, even when voting in partisan primaries.
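For readers who want to reproduce this kind of comparison, here is a minimal Python sketch of the two calculations described above: a rolling average over the last 5 [RV] or 3 [LV] polls, and the pooled average that results when both groups are lumped together. The poll counts [183 and 54] come from this article; the margins in the example are hypothetical placeholders, not the real data, and the function names are my own for illustration.

```python
from statistics import mean


def rolling_average(values, window):
    """Trailing mean of the last `window` values.

    The first output corresponds to the first point where a full window exists.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        mean(values[i - window + 1 : i + 1])
        for i in range(window - 1, len(values))
    ]


def pooled_average(rv_mean, lv_mean, n_rv=183, n_lv=54):
    """Unweighted average across all polls, written in terms of the group means.

    The default counts are the RV/LV totals cited in the article.
    """
    return (n_rv * rv_mean + n_lv * lv_mean) / (n_rv + n_lv)


# HYPOTHETICAL margins (Democratic share minus Republican share, in points),
# listed in chronological order. These are not the article's data.
rv_margins = [4.0, 2.0, 3.0, 1.0, 0.0, 2.0, -1.0]
lv_margins = [-2.0, -4.0, -3.0, -5.0]

rv_trend = rolling_average(rv_margins, window=5)  # 5-poll window for RV
lv_trend = rolling_average(lv_margins, window=3)  # 3-poll window for LV

# Share of the pooled average's weight that comes from RV polls.
rv_weight = 183 / (183 + 54)

# Pooled result for hypothetical group means of +2.0 (RV) and -4.0 (LV).
example_pooled = pooled_average(rv_mean=2.0, lv_mean=-4.0)
```

With 183 of the 237 polls being RV-based, roughly 77% of the weight in an unweighted aggregate comes from RV polls, so the pooled figure sits much closer to the RV average than to the LV average.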

The current RealClearPolitics generic congressional polling chart would look very different if the lines were shifted to account for the massive difference between whatever RV datasets are being used when selecting prospective voters to be polled. Comparing the following chart to the ones above makes the impact on the overall average obvious:

Size Matters

Returning to the overall numbers introduced at the start of this article, there’s another outlier that sticks out - the average sample size for each of the two categories:

When I calculated the correlations between each data point [shown in a moment], the size of the sample in a given poll wasn’t a major factor, but there’s more than meets the eye, in my opinion. Among all LV polls, there were only 4 in which Democrats earned a greater share of support, and only 1 poll had a tied result:

Moreover, ALL 3 Democratic-leaning LV polls that published their sample size were among the 6 smallest of 51 LV samples [and the 4th was 1 of the 3 unpublished-sample polls]:

In other words, as a pollster’s random sample of voters grew larger and more likely to vote, the shift towards a preference for Republican candidates was significant, and this shift remained largely consistent throughout the 14-month period.*

[*the very first dataset went all the way back to February of 2021 but only added 4 additional polls, which were excluded from the deeper calculations; the “ord” column numbering began in Feb]
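The two views of sample size discussed above [an overall correlation, and where the Democratic-leaning polls fall when ranked by sample size] can be sketched in Python as follows. This is only an illustration under my own assumptions: each poll is a hypothetical `(sample_size, margin)` pair, the margin is defined as Democratic share minus Republican share, and the numbers are placeholders rather than the actual poll data.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


def size_ranks_of_dem_leaning(polls):
    """1-based sample-size ranks (1 = smallest) of polls with a positive margin."""
    ordered = sorted(polls, key=lambda poll: poll[0])
    return [
        rank
        for rank, (_, margin) in enumerate(ordered, start=1)
        if margin > 0
    ]


# HYPOTHETICAL LV polls as (sample_size, margin) pairs; not the article's data.
lv_polls = [(2000, -4.0), (600, 2.0), (1500, -3.0), (800, 1.0), (1000, -1.0)]

sizes = [size for size, _ in lv_polls]
margins = [margin for _, margin in lv_polls]

r = pearson(sizes, margins)                  # overall linear relationship
ranks = size_ranks_of_dem_leaning(lv_polls)  # where the D-leaning polls sit
```

The two measures answer different questions: a correlation coefficient summarizes the linear relationship across every poll, while the rank check only asks whether the Democratic-leaning polls cluster among the smallest samples. A weak correlation and a striking cluster at the small end can coexist.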

Cui Bono?

The logical thing for any reader to do at this point would be to ask why? - Why would any polling firm - or any organization paying for a poll - intentionally choose a methodology that they know will produce inaccurate/skewed results?

After all, the constant theme we’ve heard after each of the last few election cycles has been that polls overestimated the Democratic share of the actual electorate [except in 2018, when there was a major ‘wave’ for Democrats at the mid-term point for President Trump], or that various other mysterious factors must exist that explain who actually votes. In the aftermath of the 2020 election, Scientific American managed to blame everything but the relationship between RVs and LVs.

Yet somehow, the 2022 election approaches and very little has actually changed amongst pollsters, despite a decade’s worth of statistical dumpster fires that should’ve produced a tangible amount of soul-searching and formula-tweaking by now.

There is, however, a stunningly obvious answer:

The overwhelming majority of modern polling isn’t conducted in order to provide an accurate picture of what to expect in upcoming elections; the primary purpose of most polling is to OBSCURE the truth and SHAPE the public’s perceptions about the state of politics in the United States.

I’m not concerned about readers collapsing in shock at that statement, of course. The shift towards dueling narratives in our modern media environment is obvious to anyone who has reached middle school [my 8th-grade son has confirmed this].

What IS surprising is that this narrative control remains effective - especially since early 2020, when censorship truly became a weapon of the largely left-leaning ‘establishment.’ This has exacerbated the impact of all narrative efforts, by reducing the public’s access to information that conflicts with the ‘accepted’ truth - using ‘the science’ as a Trojan horse.

[I don’t make such claims lightly - I do so having spent two years chronicling facets of that censorship. Here’s a handful of my articles on this topic, from the last year: The Sword of D’Omicron, Manufactured Consensus, The Architects of COVID-19 Consensuship, Anti-Science - The Guardians of the Fallacy, The Sound of Science]

Sometimes, trends can be explained by innocent ignorance, but we can probably rule out such innocence for at least part of the polling results. I added gray highlights to each poll result that differed from LV results by more than 8 percentage points within 48 hours before or after the LV results were published. All but 1 of these polls were RV-based, and most of them came from a single pollster:
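The highlighting rule described above can be approximated in a few lines of Python. This is a sketch under stated assumptions, not the exact procedure I used: it assumes a poll is flagged if it differs by more than 8 points from any single LV poll published within 48 hours on either side, it compares margins [Democratic share minus Republican share], and the pollster names, dates, and numbers are hypothetical.

```python
from datetime import datetime, timedelta


def flag_outliers(polls, threshold=8.0, window=timedelta(hours=48)):
    """Return polls whose margin differs by more than `threshold` points
    from at least one LV poll published within `window` before or after."""
    lv_polls = [poll for poll in polls if poll["type"] == "LV"]
    flagged = []
    for poll in polls:
        for lv in lv_polls:
            if lv is poll:
                continue  # never compare an LV poll against itself
            close_in_time = abs(poll["date"] - lv["date"]) <= window
            far_in_margin = abs(poll["margin"] - lv["margin"]) > threshold
            if close_in_time and far_in_margin:
                flagged.append(poll)
                break  # one qualifying LV comparison is enough
    return flagged


# HYPOTHETICAL polls; pollster names, dates, and margins are placeholders.
polls = [
    {"pollster": "A", "type": "LV", "date": datetime(2022, 9, 1), "margin": -3.0},
    {"pollster": "B", "type": "RV", "date": datetime(2022, 9, 2), "margin": 6.0},
    {"pollster": "C", "type": "RV", "date": datetime(2022, 9, 2), "margin": 2.0},
    {"pollster": "D", "type": "RV", "date": datetime(2022, 9, 10), "margin": 7.0},
]

flagged = flag_outliers(polls)
flagged_names = [poll["pollster"] for poll in flagged]
```

In this toy data, only pollster B is flagged: it sits 9 points away from an LV poll published a day earlier. Pollster C is within the 8-point threshold, and pollster D has no LV poll within 48 hours to compare against.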

The most common question I’ve heard others ask as I’ve investigated the origin of the COVID-19 pandemic is “cui bono” - ‘who benefits?’

It’s time to look beyond mere partisanship to answer that question; however, my focus remains on the answer to the opposite question - who loses?

When it comes to censorship, the answer is

all of us

~Rixey

P.S. For the curious…

It’s kind of funny watching the rest of society suddenly forced to deal with what us pro-gun people have been dealing with for decades.

Lying media

Censorship/deplatforming

Targeted law enforcement and trumped up charges

Skewed polls

Political hostility

Etc etc etc…

“Welcome to the party pal.”

It might be said too...such polls could be used to "prove" that the race was tight, in the event extraordinary circumstances require "saving" Democracy.